For independent events A and B, B has no influence on whether A happens. “Independent data” or “independent samples” are both shorthand for data sampled or otherwise resulting from independent probability distributions. Relating the mathematical concept to a real-world situation requires a clear idea of the population of interest, considerable domain expertise, and a mental sleight of hand that is nicely exposed in the short article What are independent samples?, by the Minitab® folks: independent samples are samples that are selected randomly so that their observations do not depend on the values of other observations. Notice what is happening here: what started out as a property of probability distributions has now become a prescription for obtaining data in a way that makes it plausible that we can assume independence for the probability distributions that we imagine govern our data. No procedure for selecting data is ever going to guarantee the mathematical properties of our models. Nevertheless, the statement does show the way to proceed.

Independence, on the other hand, can be a show stopper. The whole test depends on it, but this assumption is baked into the software that will run the test. Checking for independence is the difference between doing statistics and carrying out a mathematical or maybe just a mechanical exercise. It often involves considerable creative thinking and tedious legwork.

So, what do we mean by independent samples or independent data, and how do we go about verifying it? Independence is a mathematical idea, an abstraction from probability theory. Two events A and B are said to be independent events if the probability of both A and B happening equals the product of the probabilities of A and B happening. That is: P(AB) = P(A)P(B). A more intuitive way to think about it is in terms of conditional probability. In general, the probability of A happening given that B happens is defined, when P(B) > 0, to be: P(A|B) = P(AB)/P(B). If A and B are independent then P(A|B) = P(A).
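The product rule and the conditional probability definition can be checked by brute force on a small sample space. The sketch below is only an illustration: the two-dice setup, the events, and the helper names (`prob`, `is_independent`, `conditional`) are assumptions introduced here, not part of the original discussion.

```python
from fractions import Fraction
from itertools import product

# Hypothetical sample space: the 36 equally likely outcomes of two fair dice.
outcomes = list(product(range(1, 7), repeat=2))

def prob(event):
    """Probability of an event given as a predicate on (first, second)."""
    favourable = sum(1 for o in outcomes if event(o))
    return Fraction(favourable, len(outcomes))

def is_independent(a, b):
    """Check the product rule P(AB) == P(A) * P(B) exactly."""
    p_ab = prob(lambda o: a(o) and b(o))
    return p_ab == prob(a) * prob(b)

def conditional(a, b):
    """P(A|B) = P(AB) / P(B), assuming P(B) > 0."""
    return prob(lambda o: a(o) and b(o)) / prob(b)

first_is_even = lambda o: o[0] % 2 == 0       # event A
second_is_six = lambda o: o[1] == 6           # event B
sum_is_twelve = lambda o: o[0] + o[1] == 12   # event C

# A and B concern different dice, so B tells us nothing about A.
print(is_independent(first_is_even, second_is_six))               # True
print(conditional(first_is_even, second_is_six), prob(first_is_even))  # 1/2 1/2

# C depends on the second die, so knowing B changes the probability of C.
print(is_independent(sum_is_twelve, second_is_six))               # False
print(conditional(sum_is_twelve, second_is_six), prob(sum_is_twelve))  # 1/6 1/36
```

Exact fractions are used so the equality test is not blurred by floating-point rounding. With real data there is no sample space to enumerate, which is why independence has to be argued from how the data were collected.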

However, in my opinion, from the point of view of statistical practice, assumption 2 is very important, but it is relatively easy to check, and the t-test is robust enough to deal with some deviation from normality. There are other tests and workarounds for the situations where assumption 4 does not hold.

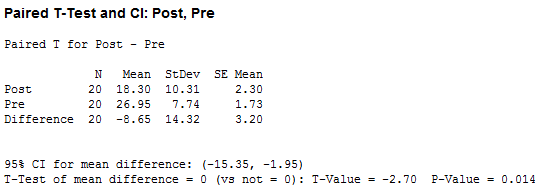

(The MIT OpenCourseWare notes Null Hypothesis Significance Testing II contain an elegantly concise mathematical description of the t-test.) All of the above assumptions must hold, or be pretty close to holding, for the test to give an accurate result. On the other hand, if the test statistic does fall in the rejection region, then we reject \(H_0\) and conclude that our data, along with the bundle of assumptions we made in setting up the test and the “steel trap” logic of the t-test itself, provide some evidence that the population means are different.

Next, a test statistic that includes the difference between the two sample means is calculated, and a decision is made to establish a “rejection region” for the test statistic. This region depends on the particular circumstances of the test, and is selected to balance the error of rejecting \(H_0\) when it is true against the error of not rejecting \(H_0\) when it is false. If we compute the test statistic and its value does not fall in the rejection region, then we do not reject \(H_0\) and we conclude that we have found nothing.
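The mechanics of the test statistic and the rejection region can be sketched in a few lines. This is a minimal illustration, assuming the pooled (equal-variance) form of the two-sample t statistic and a two-sided test at the 5% level. The data are made up, the function names are hypothetical, and the critical value 2.306 is the standard t-table entry for 8 degrees of freedom.

```python
from math import sqrt
from statistics import mean, variance

def pooled_t_statistic(x, y):
    """Two-sample t statistic using a pooled variance estimate."""
    n, m = len(x), len(y)
    # Pooled sample variance with n + m - 2 degrees of freedom.
    sp2 = ((n - 1) * variance(x) + (m - 1) * variance(y)) / (n + m - 2)
    return (mean(x) - mean(y)) / sqrt(sp2 * (1 / n + 1 / m))

def decide(x, y, critical_value):
    """Return (t, reject), rejecting H0 when |t| exceeds the critical value."""
    t = pooled_t_statistic(x, y)
    return t, abs(t) > critical_value

# Assumed two-sided 5% critical value for 5 + 5 - 2 = 8 degrees of freedom.
T_CRIT_DF8 = 2.306

# Made-up samples, purely for illustration.
control = [1, 2, 3, 4, 5]
treated_small_shift = [3, 4, 5, 6, 7]
treated_large_shift = [4, 5, 6, 7, 8]

t, reject = decide(control, treated_small_shift, T_CRIT_DF8)
print(round(t, 3), reject)   # -2.0 False: not in the rejection region

t, reject = decide(control, treated_large_shift, T_CRIT_DF8)
print(round(t, 3), reject)   # -3.0 True: in the rejection region
```

Nothing in this computation looks at how the samples were obtained. The arithmetic runs the same way whether or not the observations are independent.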
